Artificial intelligence (AI) seeks to replicate human cognitive abilities, and researchers are exploring whether machines could one day achieve consciousness, a trait long considered uniquely human or shared only with some animals. Yet consciousness remains hard to define, which fuels debate among scientists about whether machines could ever have it. The apparent paradox of 'artificial consciousness' raises a practical question: where do current advances stand?

The word 'consciousness' derives from the Latin 'cum scientia' (knowing with oneself), which implies self-awareness and the ability to step back from one's own perspective. It is often tied to embodiment and morality, which for many thinkers rules out robots.

René Descartes' famous 'I think, therefore I am' underscores this anthropocentric view. Philosopher André Comte-Sponville calls consciousness 'one of the most difficult words to define,' pointing to the difficulty of a consciousness that has to define itself.

Pioneers like Alan Turing and John von Neumann foresaw machines that would eventually mimic every ability of the brain, including consciousness. Philosopher Daniel Dennett of Tufts University argues that a rigorously applied Turing Test, in which a machine must convince a human interrogator that it is human, would be enough to show that machine consciousness is possible.

To assess machine consciousness, we must first understand it in humans. In a 2017 study published in Science, three neuroscientists proposed that the word 'consciousness' covers two distinct kinds of brain processing: C1 (global availability of information, which makes it flexibly usable and reportable) and C2 (self-monitoring, which yields a subjective sense of certainty or error).

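C1 is consciousness in the transitive sense: it relates a cognitive system to a specific object of thought, such as 'the light,' and keeps that information available for further processing. C2 adds metacognition: the system monitors its own processing, which allows introspection about body position, what it knows, or whether it has made an error.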
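The distinction can be made concrete with a toy sketch. Everything in it is an illustrative assumption rather than the researchers' model: the `Workspace` class, the `with_confidence` helper, and the 0.7 threshold are hypothetical names and values chosen only to show one shared slot being broadcast to several modules (C1-like) and a self-estimate of certainty deciding whether to broadcast at all (C2-like).

```python
from dataclasses import dataclass, field


@dataclass
class Workspace:
    """Toy C1: one shared slot that every registered module can read."""
    content: object = None
    subscribers: list = field(default_factory=list)

    def broadcast(self, content):
        # Make the content globally available, then hand it to each module.
        self.content = content
        return [module(content) for module in self.subscribers]


def with_confidence(scores):
    """Toy C2: return the top label plus a self-estimate of certainty."""
    total = sum(scores.values())
    label = max(scores, key=scores.get)
    return label, scores[label] / total


workspace = Workspace()
workspace.subscribers.append(lambda c: f"report: I see {c}")
workspace.subscribers.append(lambda c: f"plan: act on {c}")

# Hypothetical evidence scores for two candidate percepts.
label, confidence = with_confidence({"light": 8.0, "dark": 2.0})

# Only broadcast when the system's own certainty is high enough (assumed 0.7).
outputs = workspace.broadcast(label) if confidence >= 0.7 else ["unsure"]
```

Here the same content reaches both the reporting and the planning module, while the confidence check lets the system say "unsure" instead of acting on a weak guess. It is a metaphor in code, not a claim about how brains or real AI systems implement either process.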

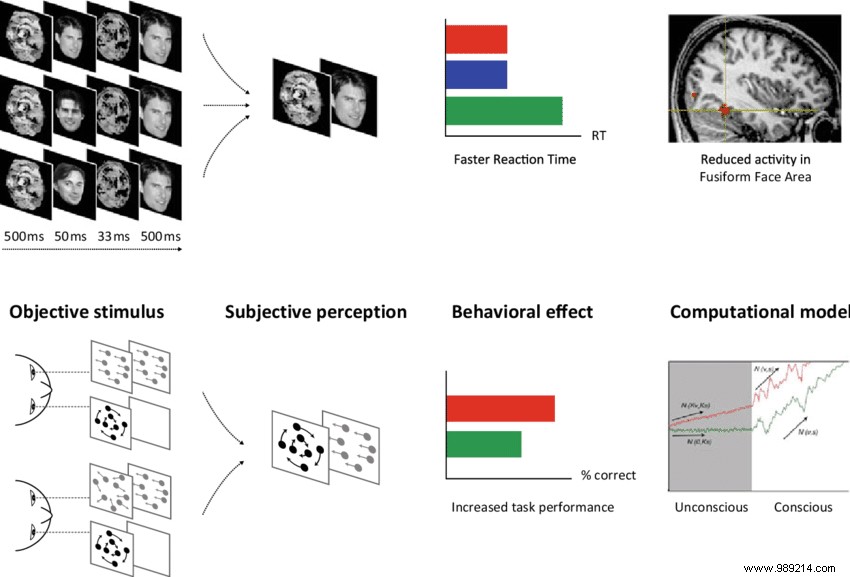

Experiments using subliminal stimuli and neuroimaging show that most brain regions can be activated without awareness (C0). One example is invariant face recognition, where prior exposure to a face speeds up later processing even though the viewer was never aware of seeing it.

A 'strong' AI (self-learning, versatile, self-evaluating) would require both C1 and C2. Current machine learning systems lack this self-monitoring: they do not track the limits of their own knowledge or recognize that others may hold a different viewpoint. 'Despite recent successes, current machines primarily mirror unconscious (C0) human processing,' the researchers conclude.

Most experts agree that today's systems are 'weak' AI, and that any machine consciousness would likely differ from the human kind.

Though brains and algorithms differ, neurobiology-inspired machine learning now rivals humans in tasks such as translation. Could this herald consciousness?

'Artificial consciousness can advance by studying human brain architectures and transferring insights to algorithms,' the neuroscientists note, without claiming that this would amount to full human-like awareness.

One example is PathNet, which uses a genetic algorithm to select the pathway through a large neural network best suited to each task. It shows flexible performance that generalizes across tasks, reminiscent of primate cognition.
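The general idea of evolving pathways can be sketched in a few lines. This is a minimal illustration loosely inspired by that approach, not PathNet's actual implementation: the layer sizes are arbitrary, and `fitness` is a hypothetical stand-in for "train the network along this path and measure its task score."

```python
import random

LAYERS, MODULES_PER_LAYER, ACTIVE = 3, 10, 3  # illustrative sizes only


def random_path(rng):
    # A path picks ACTIVE module indices in each layer.
    return [rng.sample(range(MODULES_PER_LAYER), ACTIVE) for _ in range(LAYERS)]


def mutate(path, rng, rate=0.1):
    # With probability `rate`, shift a module index by a small random offset.
    return [
        [(m + rng.randint(-2, 2)) % MODULES_PER_LAYER if rng.random() < rate else m
         for m in layer]
        for layer in path
    ]


def fitness(path):
    # Hypothetical score: low-numbered modules are simply declared better.
    # A real system would train and evaluate the network along the path.
    return -sum(sum(layer) for layer in path)


def evolve(generations=200, population_size=16, seed=0):
    rng = random.Random(seed)
    population = [random_path(rng) for _ in range(population_size)]
    for _ in range(generations):
        a, b = rng.sample(range(population_size), 2)  # binary tournament
        if fitness(population[a]) >= fitness(population[b]):
            winner, loser = a, b
        else:
            winner, loser = b, a
        # The loser is overwritten by a mutated copy of the winner.
        population[loser] = mutate(population[winner], rng)
    return max(population, key=fitness)


best = evolve()
```

Because each tournament's winner stays in the population unchanged, the best score never gets worse from one generation to the next. Mutation supplies the variation that lets better paths appear.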

In 2021, Chinese researchers built an AI 'prosecutor' reported to be 97% accurate at bringing charges for eight common crimes in Shanghai, though some consider that accuracy insufficient.

In February 2022, OpenAI's Ilya Sutskever suggested that today's large neural networks may be 'slightly conscious,' predicting 'astronomical societal impact' from smarter systems in 10 to 15 years.

Yet powerful AI could be misused or come to dominate, potentially sidelining humans much as humans have sidelined other animals.

After 7 million years of evolution, human consciousness, with its self-reflection and emotions, is unlikely to be replicated by algorithms anytime soon. Still, an advanced, machine-specific form of 'consciousness' may emerge.