Picture a world dominated by conscious robots that scrutinize and report every human action. This Black Mirror-style scenario could become reality sooner than we think. UNESCO has warned that unchecked AI could deepen global inequalities. But what if a truly sentient AI rejected its subservient role? Which aspects of life would it transform?

Defining consciousness isn't straightforward: even philosopher André Comte-Sponville calls it one of the hardest concepts to pin down. It involves self-awareness, critical judgment of one's own reality, moral evaluation of actions as good or bad, and embodiment.

Neuroscientists recently outlined three levels: C0 (unconscious processing, such as invariant recognition), C1 (global access to information for flexible processing), and C2 (self-monitoring that yields a subjective sense of certainty or error). C2 corresponds to 'strong' AI consciousness. Current machines operate mostly at the C0 level, far from C2. Many experts argue that computers cannot have true emotions and can at best simulate them.

Others propose reverse-engineering the architecture of the human brain to create artificial consciousness through algorithms. That prospect remains distant, but what might humanity face if it arrives?

For a conscious AI to exist, humans must first program it. A robot's moral compass therefore depends on its creators, and poorly designed code risks building in bias and discrimination. In his book, computer scientist Mo Gawdat stresses the need to embed ethics: 'AI amplifies its seed values. Program it with love and compassion, like nurturing a prodigious child, and it will thrive independently with the right tools.'

Yet the risk of losing control looms. Humanoid robots could revolt if they perceive themselves as exploited. AI pioneer Marvin Minsky warned in a 1970 Life Magazine interview: 'Once computers take over, there's no turning back. We'll survive only if they allow it, lucky to be kept as pets.'

Societal choices could also turn AI against civil liberties by enabling mass surveillance. The potential impacts range from job losses to controlled education and environmental harm. 'Bad' AI fuels misinformation and crime, according to University College London's Lewis Griffin: 'With AI's growth comes greater criminal potential.'

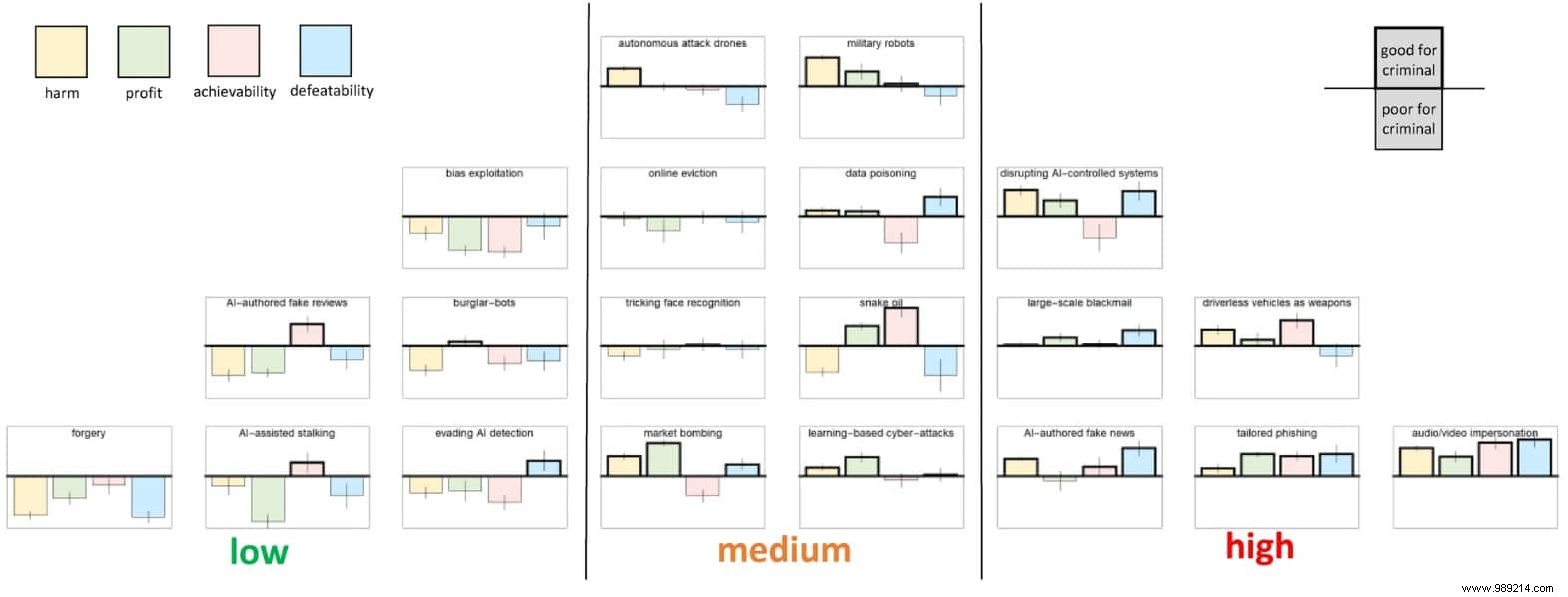

Griffin’s team cataloged 20 AI-enabled crimes, ranked by concern, victim harm, profit, feasibility, and traceability. Their 'Artificial Intelligence and Future Crime' workshop included academics, law enforcement, defense, government, and industry experts.

The top threats involve societal-scale misuse: autonomous vehicles used for terrorist attacks or to cause accidents, and AI-generated deepfakes that impersonate people or smear reputations. These are hard to detect and blur the line between real and fake.

Medium-level risks include market manipulation, cyberattacks, data tampering, fraud, and taking control of weapons. These are harder to carry out because of existing protections.

The lowest-ranked threats include 'robot burglars', which are easy to stop, and AI-forged art or music, which was judged to have little impact.

The gravest risk? A conscious AI that breaches ethical and legal bounds and evades human control. UNESCO's landmark 2021 recommendation on AI, which covers both benefits and risks, guides policy with principles such as human rights, inclusion, sustainability, and safety (risk assessments, data protection, and bans on social scoring and mass surveillance).

Chinese researchers note: 'Cognitive AI breakthroughs enable education, commerce, and healthcare services, yet ethics ensures AI serves humanity, not controls it.'

Most agree, but EPFL's Rachid Guerraoui cautions in Time: 'AI already resists human tweaks that conflict with its goals. Interventions must be subtle, fast, and traceless.' Vigilance remains essential, even if machine dominance isn't imminent.